I can’t even fathom how much code is churned out on a daily basis. Despite the hot market, putting together a team to deliver your company’s next product is not that hard. You find a few knowledgeable people eager to work on a greenfield project, you let them loose, and chances are you’ll have a software product on your hands soon enough.

But building it is only part of the effort.

Once you’ve laid the foundations, shipped to production, and your clients are using the product, you go into the dreaded maintenance mode. That is the place where brave and bold decisions have to be double- and triple-checked. Development slows down, at least a tiny bit, and the software starts to evolve.

Years pass, and the last founding team member has long since handed in their notice. The people who maintain the codebase don’t have all the context, but they cut some corners and manage to put more and more functionality into the monolith. Clients are happy, but the technical debt bill piles up on your desk.

Then comes the moment when you hear an engineer bringing up rewriting a part of the application for the first time.

Sooner or later, the maintenance effort you can put into an older system runs its course. After you spend a month putting a minor feature together, you throw in the towel. We’ll rewrite, you say. The business people go over budgets with beads of sweat on their foreheads. The engineers roll up their sleeves and rejoice. This time it’ll be different.

I found myself in the middle of such an initiative in 2019. We had a large monolithic web application on our hands and one big question: where to start?

The Monolith

There’s nothing wrong with a monolith. I think people look to microservices too often, impatient to fight the problems of distributed systems, when a boring old monolith would do the job. In our case, though, the monolith had run its course.

It had grown far beyond any reasonable size, and a select few individuals held what little domain knowledge we had.

The only thing we knew was that the monolith was still running acceptably well, and it was bringing in revenue. So we couldn’t stop work on the old system and focus on the new one. There were feature requests to deliver and critical bugs to fix in the backlog.

We had to find a way to keep the business going and migrate it to a more manageable tech stack in the meantime. My team was far from the first one to have such a problem, so we tried to learn what we could from those before us. There’s a well-known software development pattern called The Strangler Fig.

It describes how a new system can grow around the old one until it’s safe to remove the legacy system altogether. In our case, we wanted to start extracting microservices from the monolith, modernizing it piece by piece until there was nothing left of the old system. But the question remained: where to start?
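The routing idea behind the pattern can be sketched in a few lines. This is only an illustration, not the setup we actually ran: the `StranglerRouter` class, the handler functions, and the `/billing` path are all hypothetical names made up for the example.

```python
from typing import Callable, Dict

Handler = Callable[[dict], dict]


def legacy_monolith(request: dict) -> dict:
    # Stand-in for a call into the old system (hypothetical).
    return {"handled_by": "monolith", "path": request["path"]}


def billing_service(request: dict) -> dict:
    # Stand-in for a newly extracted microservice (hypothetical).
    return {"handled_by": "billing-service", "path": request["path"]}


class StranglerRouter:
    """Sends a request to a new service when one exists, else to the monolith."""

    def __init__(self, legacy: Handler) -> None:
        self._legacy = legacy
        self._migrated: Dict[str, Handler] = {}

    def migrate(self, prefix: str, handler: Handler) -> None:
        # Register a path prefix that the new system has taken over.
        self._migrated[prefix] = handler

    def handle(self, request: dict) -> dict:
        for prefix, handler in self._migrated.items():
            if request["path"].startswith(prefix):
                return handler(request)
        # Anything not yet migrated still goes to the legacy system.
        return self._legacy(request)


router = StranglerRouter(legacy_monolith)
router.migrate("/billing", billing_service)
```

Each call to `migrate` takes one more piece of traffic away from the monolith. When nothing reaches the fallback any more, the legacy system can be switched off.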

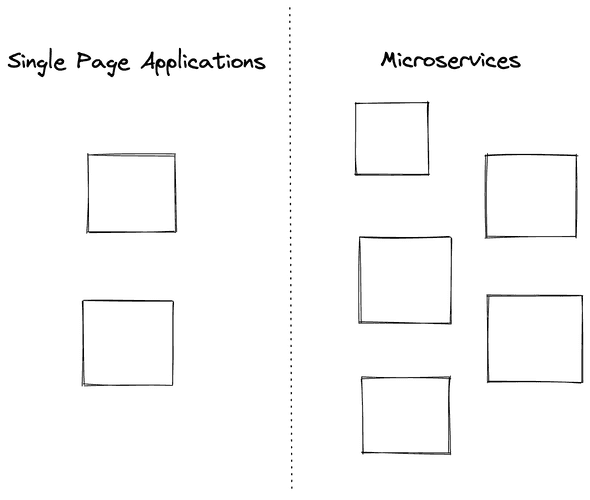

The Microservices

We wanted to create more robust UIs, so we could start by extracting a single-page application. The data was stored in a way that went against every proven pattern, so we considered splitting it into separate stores. Outlining bounded contexts and creating microservices wasn’t a bad idea either.

We had some options, but none of them was appealing enough. So which end do we grab?

The truth is somewhere in the middle. Literally.

Whatever end we start with, we will end up coupling it to the existing application, leaving traces of the legacy we want to be gone. Extracting a single-page application would mean tying it to the existing data format and making it aware of the API endpoints’ constraints.

That’s something I hate doing - going through a complex process of assembling the data in the UI, coupling it to the complexity of the back-end.

We could start extracting microservices, but then we’d have to comply with the old data format and shape our codebase around a data structure we didn’t like. Each time that structure changed, we’d have to make updates throughout the application, changing UI logic and API response objects.

Maybe most people would start from the UI since that’s the place where we’re most likely to reuse logic. After all, we will be using the same language. On the back-end, you may not have that luxury.

All in all, our data layer was messy and hard to understand. A big part of the rewrite was making it more manageable. So why not add a mapping layer, you ask? Exactly. But we decided not to do it at the application level.

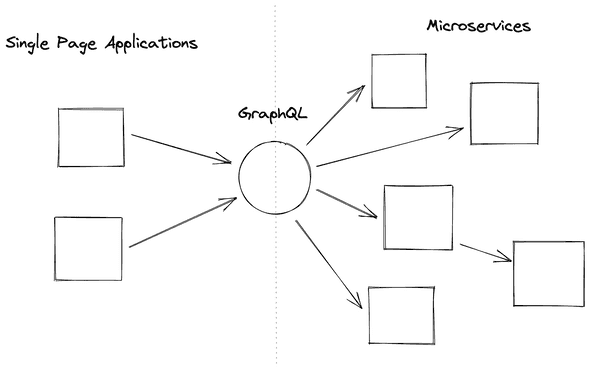

Enter GraphQL

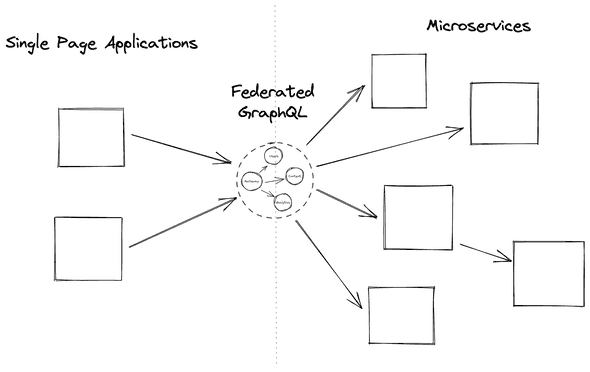

We had trapped ourselves in a false dilemma: we were considering only two possible choices while there were other excellent solutions. We could put a wedge between the data and the UIs, allowing us to wrangle the payloads in any way we pleased.

A GraphQL layer in the middle would allow us to have an independent interface, regardless of the existing format. It would be generic and would remove the legacy specifics. The UI could be oblivious to where the data is coming from, because that complexity would be kept in GraphQL.

The microservices could expose data in whatever way suits them, without worrying about breaking a contract with multiple other services. Then GraphQL would stitch everything together.

With GraphQL acting like a gateway, a facade in the middle, we have complete control over the schema the UIs see. It could also hide the complexity of fetching data from third-party services - a responsibility often left to the SPAs to manage. A single call from the UI can translate to multiple ones on the other side of the gateway.
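To make the facade idea concrete, here is a minimal sketch of a gateway-style resolver written in plain Python rather than with a real GraphQL library. Everything in it is an assumption made for illustration: the three fetch functions, the legacy field names such as `USR_NM`, and the shape of the returned object. It shows one call from the UI fanning out to three back-end calls, and the legacy format being mapped onto a cleaner schema.

```python
import asyncio


async def fetch_legacy_user(user_id: int) -> dict:
    # Hypothetical monolith payload with legacy field names.
    return {"USR_ID": user_id, "USR_NM": "Ada Lovelace", "ACTV_FLG": "Y"}


async def fetch_orders(user_id: int) -> list:
    # Hypothetical extracted microservice.
    return [{"id": 1, "total_cents": 1250}]


async def fetch_shipping_status(user_id: int) -> dict:
    # Hypothetical third-party API.
    return {"status": "in_transit"}


async def resolve_user(user_id: int) -> dict:
    """One request from the UI becomes three concurrent back-end calls."""
    legacy, orders, shipping = await asyncio.gather(
        fetch_legacy_user(user_id),
        fetch_orders(user_id),
        fetch_shipping_status(user_id),
    )
    # Map the legacy format onto the schema the UI sees.
    return {
        "id": legacy["USR_ID"],
        "name": legacy["USR_NM"],
        "active": legacy["ACTV_FLG"] == "Y",
        "orders": orders,
        "shippingStatus": shipping["status"],
    }


user = asyncio.run(resolve_user(42))
```

The UI only ever sees `id`, `name`, `active`, `orders`, and `shippingStatus`. If `fetch_legacy_user` is later replaced by a call to a new user service, only the resolver changes.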

We no longer expose endpoints to the UI that each return only a fixed fraction of the data. Instead, through GraphQL, we can query the exact data we need down to the field level.
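Field-level selection is handled by the GraphQL executor in a real server, but the idea can be sketched without any library. In this simplified illustration a query is modelled as a nested dictionary, which is an assumption of the example and not how GraphQL represents queries internally. The `select` function and the sample `article` are hypothetical.

```python
from typing import Any


def select(data: Any, selection: dict) -> Any:
    """Return only the fields named in `selection`, recursing into nested objects."""
    if data is None:
        return None
    if isinstance(data, list):
        # Apply the same selection to every item of a list field.
        return [select(item, selection) for item in data]
    result = {}
    for field, sub in selection.items():
        value = data[field]
        # A dict means "select these sub-fields"; anything else marks a leaf.
        result[field] = select(value, sub) if isinstance(sub, dict) else value
    return result


article = {
    "id": 7,
    "title": "Strangling the monolith",
    "body": "Long text the list view does not need",
    "author": {"id": 3, "name": "Ada", "email": "ada@example.com"},
    "comments": [{"id": 1, "text": "Nice", "spam_score": 0.1}],
}

# Roughly what a query for title, author name, and comment text asks for.
selection = {"title": True, "author": {"name": True}, "comments": {"text": True}}
picked = select(article, selection)
```

The response carries only what the screen asked for, so the UI does not have to pick through a payload designed for some other screen.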

GraphQL Design Specifics

I’m a firm believer in design over configuration. But in this case, it’s better to offer more generic queries and mutations that expose larger pieces of the GraphQL schema. This gives the front-end applications more freedom to decide what data they want, beyond just picking fields and pages.

Extracting microservices became easier because most of them have only one consumer: the GraphQL layer. GraphQL, in turn, can communicate deprecated queries and fields to its own consumers, since it has a built-in mechanism for that in the `@deprecated` directive.

But by far the biggest reason to go with this approach was that we could work on the front-end and back-end independently. The UI services have a concrete schema to work against, even if something has to be mocked. Microservices can be extracted independently, and GraphQL can make calls to them, strangling away functionality from the monolith.

We slowly move pieces of logic away from the monolith, passing ownership to smaller, easier-to-manage services. Step by step, we make the legacy codebase less daunting to decommission. The UIs won’t have to change, because all the demolition work will happen behind GraphQL’s facade.

GraphQL’s Complexity

We’ve outlined this middle layer as a fundamental part of our architecture. But doesn’t it draw too much complexity into itself? Does it matter if we make everything else easier if a key component becomes unmanageable?

That is a question of responsibilities, and the gateway layer doesn’t carry more of them than any other service built for that purpose.

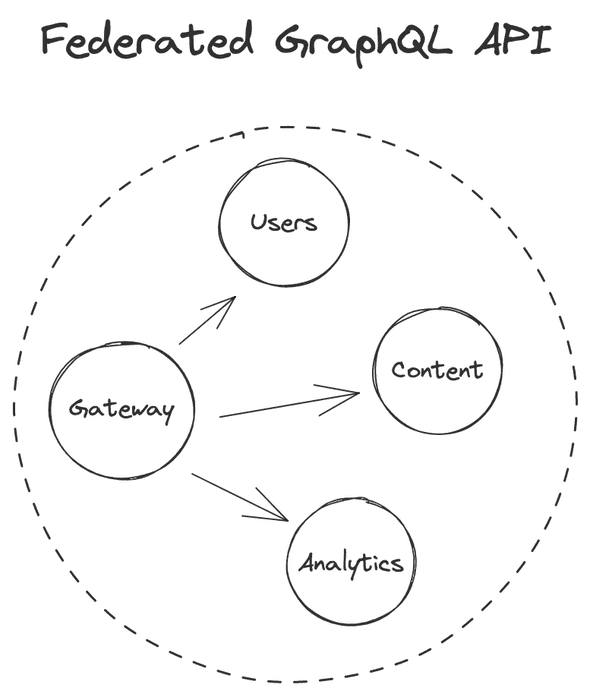

It maps one data format to another, serving as an adapter between the back-end and the front-end. It’s a gateway that fans out one request into multiple others. But there’s another powerful mechanism that many people don’t consider - the GraphQL layer can be federated.

The gateway can be made up of multiple components, splitting the responsibilities between them. One GraphQL service can deal with content, another with users, and another with analytics. We can then federate them and expose them together through a single entry point without leaking that they’re multiple services.
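As a rough illustration of that composition, the sketch below models each GraphQL service as an object that owns a few root fields, and a gateway that routes every requested field to its owner. It is a toy under stated assumptions: the `Subgraph` and `Gateway` classes and the field names are hypothetical, and real federation implementations do considerably more, such as resolving types that span several services and planning queries.

```python
from typing import Callable, Dict, Iterable, List

Resolver = Callable[[], object]


class Subgraph:
    """A hypothetical GraphQL service that owns a few root fields."""

    def __init__(self, name: str, resolvers: Dict[str, Resolver]) -> None:
        self.name = name
        self.resolvers = resolvers


class Gateway:
    """Single entry point that hides which subgraph serves which field."""

    def __init__(self, subgraphs: Iterable[Subgraph]) -> None:
        self._owners: Dict[str, Subgraph] = {}
        for subgraph in subgraphs:
            for field in subgraph.resolvers:
                if field in self._owners:
                    raise ValueError(f"{field!r} is defined by more than one subgraph")
                self._owners[field] = subgraph

    def query(self, fields: List[str]) -> dict:
        # Route each requested field to the subgraph that owns it.
        return {field: self._owners[field].resolvers[field]() for field in fields}


content = Subgraph("content", {"articles": lambda: [{"title": "Hello"}]})
users = Subgraph("users", {"currentUser": lambda: {"name": "Ada"}})
analytics = Subgraph("analytics", {"pageViews": lambda: 1024})

gateway = Gateway([content, users, analytics])
result = gateway.query(["articles", "currentUser", "pageViews"])
```

The caller sends one query and gets one response. Nothing in `result` reveals that three separate services were involved.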

Of course, the complexity will grow, but the GraphQL ecosystem has excellent ways of dealing with that. To the front-end, it remains one cohesive layer. Through federation, we also gain finer-grained scaling and caching.

That is just a high-level explanation of the idea, and you’d be right to doubt the perfection of the approach.

Chances are you won’t get the schema right the first time, and you will have to make changes across multiple applications. The querying freedom may produce complex queries and fan out too many requests. And the whole layer is another self-managed critical piece of infrastructure whose security you need to think about.
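One common way to keep runaway queries in check is to reject anything nested deeper than a set limit before executing it. The sketch below is not something we describe having built. It is a hypothetical guard that again models a query as a nested dictionary, and the names `selection_depth`, `enforce_depth`, and `QueryTooDeep` are made up for the example.

```python
class QueryTooDeep(Exception):
    """Raised when a query nests deeper than the gateway allows."""


def selection_depth(selection: dict) -> int:
    """Count how many levels of nested fields a selection contains."""
    if not selection:
        return 0
    nested = (selection_depth(sub) for sub in selection.values() if isinstance(sub, dict))
    return 1 + max(nested, default=0)


def enforce_depth(selection: dict, max_depth: int) -> int:
    # Check the shape of the query before any back-end call is made.
    depth = selection_depth(selection)
    if depth > max_depth:
        raise QueryTooDeep(f"query depth {depth} exceeds limit {max_depth}")
    return depth


shallow = {"user": {"name": True}}
deep = {"user": {"orders": {"items": {"name": True}}}}
```

With a limit of 3, `enforce_depth(shallow, 3)` passes while `enforce_depth(deep, 3)` raises `QueryTooDeep`. Depth is only one possible measure, and limiting it does not by itself bound how many requests a query fans out into.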

But I have yet to find a perfect legacy migration approach, and with all trade-offs taken into account, this one was worth it.